Look closely at the plant photos in Sanaz Mazinani’s new art exhibit and you’ll find something’s not quite right.

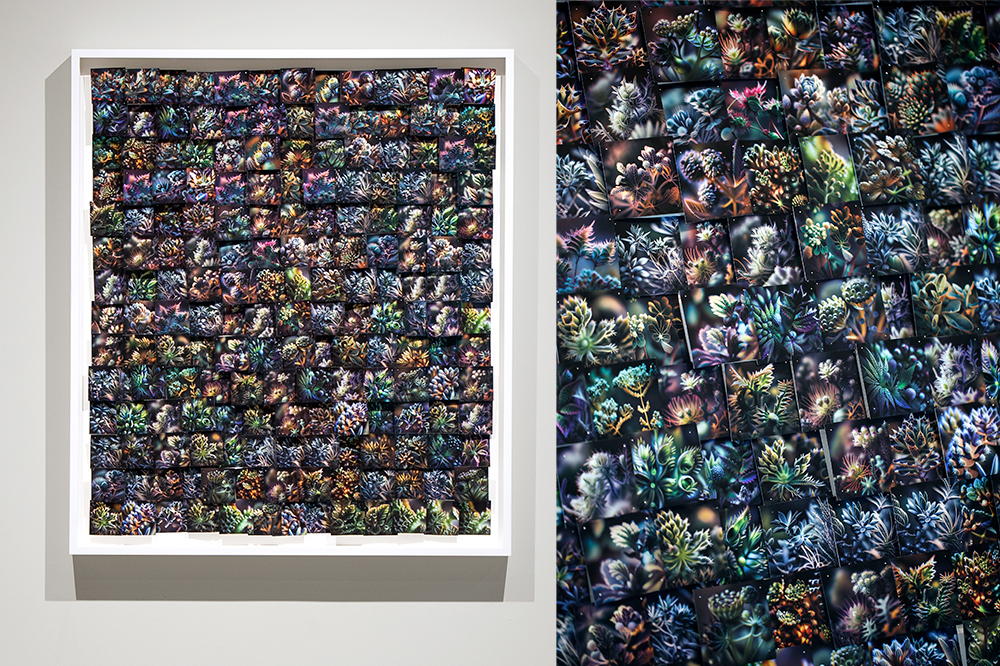

Mazinani’s collages cascade with pictures of flowers, cactuses and other vegetation, but among them are dreamier plants, ones with smeared edges and neon hues. They’re almost believable, but they radiate the subtle fuzzy glow people are beginning to recognize as the hallmark of AI-generated art. Mazinani made these pieces by testing whether AI can sense the work of its own kind too.

“I did it because I wanted to understand the technology,” says Mazinani, assistant professor of studio art in the department of arts, culture and media at U of T Scarborough. “In each piece, I tried to have a hypothesis.”

To create An Impossible Perspective, on until Nov. 11 at the Stephen Bulger Gallery, Mazinani and computer-science student Millan Singh Khurana (HBA 2022 UTSC) developed their own AI and taught it a series of rules to distinguish real images from AI-generated ones. Mazinani, a seasoned photographer, snapped 12,000 photos of plants and made 5,000 fictional ones by feeding prompts to the image generators DALL-E 2 and Midjourney. They then told the AI to rank the images from real to fake, with a different twist each time they asked, and fixed the photos to the canvases accordingly.

In one of Mazinani’s works, the AI attempted (and largely failed) to sort images Mazinani took of real and plastic plants. Other pieces had the AI create its own fictional plants by combining real and AI-generated photos. But the more Mazinani worked with the AI, the clearer it became that the technology couldn’t think critically or creatively. The AI was correct about 50 per cent of the time, and when Mazinani gave it the fake plants it had just created and asked it to rank them, the AI could not recognize them as deep fakes.

“AI is a tool, just like any other tool. Even though it’s called artificial intelligence, we shouldn’t give it that merit of intelligence,” she says. “It’s a sorting mechanism, it’s a very sophisticated, complex sorting tool.”

Turning human intuition into robot-friendly rules wasn’t exactly simple. Despite the telltale smudged edges of AI’s handiwork, for example, Khurana and Mazinani couldn’t just teach the AI that blurry means fake, since photographers (and now even phone cameras) often use an out-of-focus background to highlight an in-focus subject. Unable to understand that nuance, the AI instead had to scan each image and determine the percentage of blur, then make its best guess.
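To make the idea of a “percentage of blur” concrete, here is a minimal sketch in Python. It is not the code Mazinani and Khurana wrote, which the article does not describe. It assumes a grayscale image stored as a list of rows of 0-255 values, and the names `blur_fraction`, `tile` and `threshold` are hypothetical. The sketch splits the image into small tiles, measures the strongest edge in each one with a simple Laplacian filter, and reports the share of tiles that contain no strong edge.

```python
def laplacian(img, y, x):
    """Edge response at one pixel: the four neighbours minus four times the centre."""
    return (img[y - 1][x] + img[y + 1][x]
            + img[y][x - 1] + img[y][x + 1]
            - 4 * img[y][x])


def blur_fraction(img, tile=4, threshold=20):
    """Share of tiles whose strongest edge response is below `threshold`.

    `tile` and `threshold` are illustrative values, not tuned ones.
    """
    h, w = len(img), len(img[0])
    blurry = total = 0
    # Skip the outermost pixels, which lack a full set of neighbours.
    for ty in range(1, h - 1, tile):
        for tx in range(1, w - 1, tile):
            strongest = max(
                abs(laplacian(img, y, x))
                for y in range(ty, min(ty + tile, h - 1))
                for x in range(tx, min(tx + tile, w - 1))
            )
            total += 1
            if strongest < threshold:
                blurry += 1
    return blurry / total if total else 0.0


# Two toy 10x10 images: a crisp checkerboard and a featureless grey field.
sharp = [[255 if (x + y) % 2 else 0 for x in range(10)] for y in range(10)]
flat = [[128] * 10 for _ in range(10)]

sharp_score = blur_fraction(sharp)
flat_score = blur_fraction(flat)
```

A score like this cannot tell a deliberately soft background from an AI smear, which is the nuance the paragraph above describes. A sorter built on it could only treat a high blur share as one clue among several.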

The exhibit is still a love letter to human artistry, and to the domestic labourers who shoulder much of the fallout from seismic shifts in technology. Mazinani spent hundreds of hours printing thousands of images, cutting them into the shapes seamstresses use to make clothing, and fastening them to the canvases with more than 5,000 dressmaker pins.

AI art’s bias on full display

AI image generators are creeping further into everyday life (one recently flooded feeds by morphing users into 90s-era high school yearbook photos), but it’s still widely unknown who trains them. Mazinani’s exhibit illustrates that what an AI creates is drastically shaped by the people who tell it how to process data, meaning human biases will become virtual ones. Everything Mazinani’s AI made was an amalgamation of the rules she and Khurana gave it, visible as a gradient of features and colours. They told the AI that oversaturation and high contrast are signs of artificiality (a rule with its own caveats, since these are also traits of photos taken in bright sun). As a result, the “real” areas of the canvases are consistently populated by natural yellows and greens, with colours growing fluorescent the closer they get to “fake.”
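A rule like “oversaturated and high-contrast means artificial” can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the exhibit’s actual code: images are assumed to be lists of (r, g, b) pixels with 0-255 values, the equal weighting of the two cues is arbitrary, and the names `artificiality_score` and `rank_real_to_fake` are hypothetical.

```python
import colorsys
from statistics import mean, pstdev


def artificiality_score(pixels):
    """Score from 0 to 1; higher means more saturated and more contrasty."""
    # Saturation of each pixel, taken from its HSV representation.
    saturations = [
        colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)[1] for r, g, b in pixels
    ]
    # Perceived brightness of each pixel (standard luma weights), scaled to 0-1.
    brightness = [(0.299 * r + 0.587 * g + 0.114 * b) / 255 for r, g, b in pixels]
    # Contrast as the spread of brightness; 0.5 is the largest possible spread.
    contrast = min(pstdev(brightness) / 0.5, 1.0)
    # Equal weights are an assumption made for this sketch.
    return 0.5 * mean(saturations) + 0.5 * contrast


def rank_real_to_fake(images):
    """Return image names ordered from most 'real' to most 'fake'."""
    return sorted(images, key=lambda name: artificiality_score(images[name]))


# Two tiny stand-in images: muted leaf greens and neon magenta and green.
images = {
    "neon": [(255, 0, 255), (0, 255, 0)],
    "leaf": [(90, 110, 80), (100, 120, 90)],
}
ranking = rank_real_to_fake(images)
```

The ranking simply mirrors the rule it was given. A real leaf photographed in harsh sun would score as “fake” under this sketch, which is how a human assumption becomes the machine’s bias.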

That leaves room for societal biases to seep into AI, and Mazinani says there’s cause for concern around AI in search engines, another ubiquitous technology most people use but can’t really explain. As a woman from Iran, she knows that prompting a generative AI for “Iranian woman” pulls up droves of similar images.

“You see generated images of angry faces. Representations of despair and sadness, which is by no means a fair way to represent any group of people. These images were categorized by those who have unknowingly produced a technology with a terribly limited understanding of Iranian women.”

She says this can reinforce stereotyped, vastly oversimplified ideas of cultures, race, people and the world. Her AI’s rigid sorting is an aesthetic nod to that problem.

“What I wanted to do is to use plants to show all the biases. Because if you can see the bias in plants, of course it’s going to be in everything else.”