By: Mohammad Anwar

I'm a fourth-year CS student taking a networking course, CSCD58, with Professor Ponce. We recently had the opportunity to visit a supercomputer center owned by U of T and operated by SciNet. What follows is a short summary of the trip and what we learned.

Our visit started with meeting Dr. Daniel Gruner (Danny), the CTO of SciNet, who began by telling us about the facility. It hosts a couple of supercomputers, the largest of which is called Niagara. Niagara has around 2000 nodes (~80,000 cores in total), and each node has access to over 100 GB of RAM. The nodes are connected to each other through high-bandwidth network cabling.

Running so many nodes uses a lot of energy and requires a lot of cooling. Danny showed us the cooling infrastructure needed to keep the facility operational. We got to see huge pumps and all sorts of plumbing and electrical equipment. Danny mentioned that the electrical and plumbing infrastructure is the typical culprit when problems cause downtime at the facility.

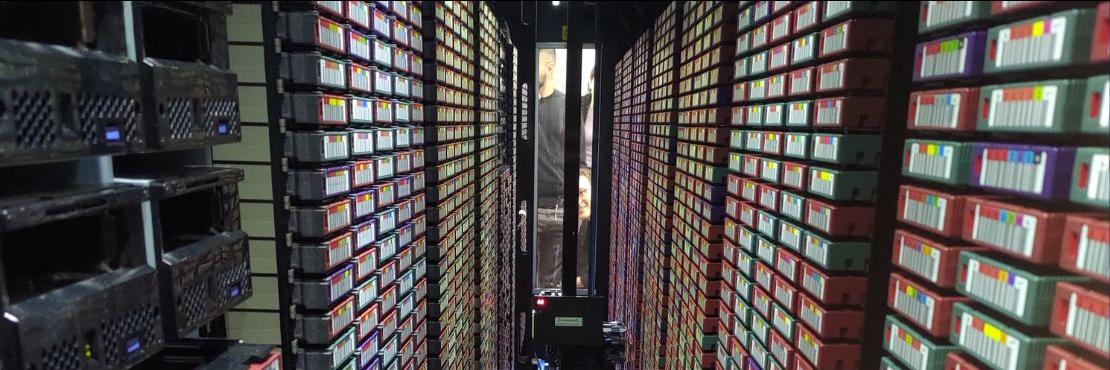

Next, we got to see the supercomputers, which are housed in a large room full of server racks. The room was really loud and cold, with a lot of fans spinning furiously to push air through the nodes and keep them cool. We could feel a constant breeze as we walked through the room. Danny lifted the floor panels to show us tonnes of cabling running under the floor, which connects all the nodes together. We also opened up the racks to check out the different types of nodes.

Finally, Danny took the time to answer questions and explain use cases for the supercomputer. SciNet's supercomputers are primarily used for research, which largely involves simulating complex scenarios and systems. Researchers have previously used the computer to simulate the inner workings of stars and oceanic ecosystems. Danny emphasized that there is a lot of room for computer scientists to contribute to research and development in the area of supercomputers. In particular, making good use of a supercomputer requires techniques such as parallel programming to run complex computations at scale. Danny mentioned that there is often a gap: researchers know what they want to study, but not how to accomplish it with the computing tools available to them. This is where computer scientists play an important role.
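To give a rough feel for what parallel programming means here, below is a minimal Python sketch of the basic pattern: split the work into chunks, compute a partial result for each chunk independently, then combine the partial results. This is only an illustration and not how jobs are actually written for Niagara. The function names are made up for this example, and Python threads are used purely to keep it short (they do not speed up CPU-bound work in standard Python). Real HPC codes typically rely on tools such as MPI or OpenMP to spread work across cores and nodes.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(values, num_chunks):
    """Split a list into num_chunks contiguous pieces of near-equal size."""
    size, extra = divmod(len(values), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        # The first `extra` chunks each take one additional element.
        end = start + size + (1 if i < extra else 0)
        chunks.append(values[start:end])
        start = end
    return chunks


def partial_sum_of_squares(chunk):
    """Work done independently by one worker on its own chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(values, num_workers=4):
    """Distribute chunks to workers, then combine their partial results."""
    chunks = split_into_chunks(values, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    data = list(range(1000))
    print(parallel_sum_of_squares(data))
```

The key idea is that each chunk can be processed without knowing anything about the others, which is what lets the same computation scale out to many cores.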

Overall, the visit was very eye-opening and a great learning experience. I hope that future students also get the opportunity to learn about and become involved with high-performance computing and supercomputers!

______________________________

A note from Professor Ponce:

In the context of the CSCD58 “Computer Networks” course, we visited one of the largest supercomputers available to academics in Canada: “Niagara.”

Niagara is a large cluster composed of 2,024 Lenovo SD530 servers, each with 40 Intel "Skylake" cores at 2.4 GHz (1548 nodes) or 40 Intel "CascadeLake" cores at 2.5 GHz (476 nodes). It was the 53rd fastest supercomputer on the TOP500 list of fastest supercomputers in the world in June 2018, and is at number 140 on the current list (June 2022).
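As a quick sanity check, the two node counts add up to the quoted total, and multiplying by 40 cores per node gives the total core count, which matches the "~80,000 cores" figure in the summary above. A minimal Python sketch of that arithmetic (the constant and function names are only illustrative):

```python
# Node counts and cores per node, as quoted in the paragraph above.
SKYLAKE_NODES = 1548
CASCADELAKE_NODES = 476
CORES_PER_NODE = 40


def total_nodes():
    """Total number of servers across both CPU generations."""
    return SKYLAKE_NODES + CASCADELAKE_NODES


def total_cores():
    """Total number of CPU cores in the cluster."""
    return total_nodes() * CORES_PER_NODE


if __name__ == "__main__":
    print(total_nodes())  # 2024
    print(total_cores())  # 80960
```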

Niagara is hosted at the SciNet datacenter together with other supercomputers.

SciNet is the advanced research computing and HPC consortium at the University of Toronto (https://www.scinethpc.ca).

We are grateful for SciNet’s hospitality and the tour of their installations, and we hope that in the future more students can participate in these field trips and data center visits.

More pictures from the visit can be seen at: https://cmsweb.utsc.utoronto.ca/marcelo-ponce/events/SciNet_datacenter_visit-2022/